
Zapier just made a decision that will reshape how talent acquisition teams think about hiring: it now requires AI fluency from every new hire, regardless of role. The bar is not deep technical expertise in prompt engineering but demonstrated curiosity, strategic thinking about how these tools amplify work, and a builder's mindset. Within six months, internal usage at Zapier jumped from 89% to 97%, and the company is now systematizing that fluency directly into its hiring funnel.

This represents a fundamental recalibration of what baseline capability for knowledge work looks like in 2026. When a rigorous hiring organization starts evaluating every candidate through an AI fluency lens, it's worth asking: what does this framework measure, and should your organization adopt something similar?

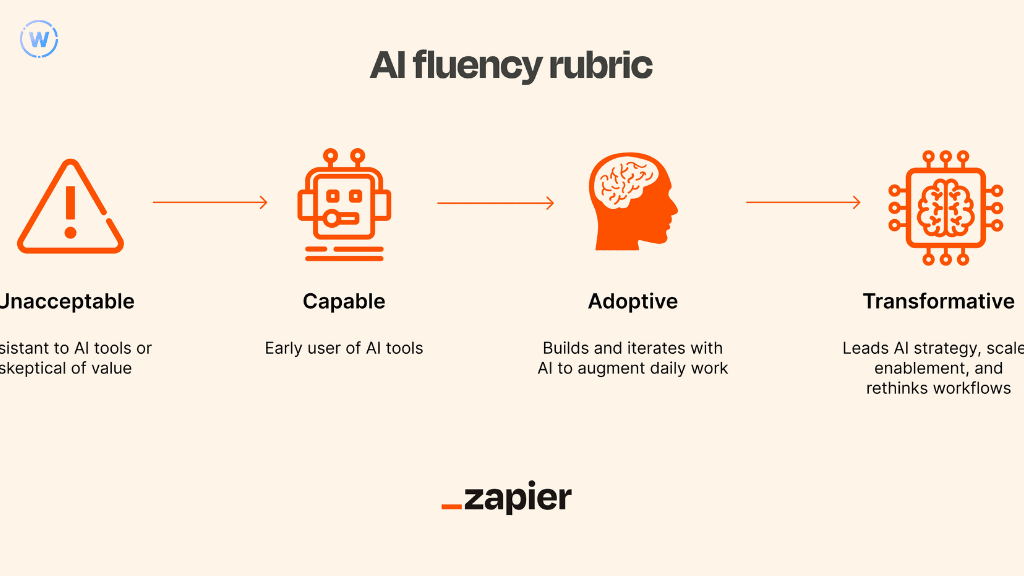

Understanding Zapier's 4-Level AI Fluency Framework

Zapier maps AI capability across four distinct levels. The framework does not test whether someone can explain transformer architectures; it tests whether they can demonstrate the mindset and practical skills to use these tools for meaningful work outcomes.

Unacceptable: Resistant to these tools or skeptical of their value, showing an unwillingness to experiment. Active resistance signals a gap that creates friction as the organization moves forward.

Capable: Early user who has purposefully applied one or more tools to personal or professional goals, moving past theoretical understanding into practical application. This is the minimum bar Zapier expects from every new hire.

Adoptive: Builds and iterates with these tools to augment daily work, able to clearly explain how they improve outcomes. This represents genuine workflow integration where the technology has become part of problem-solving.

Transformative: Leads strategy, scales enablement, and fundamentally rethinks workflows with an AI-first approach. This person shapes how others use these tools and identifies opportunities for systematic process redesign.

The genius is in what the framework doesn't require: there is no expectation that marketers code or that finance analysts build custom models. Instead, it evaluates mindset, demonstrated application, and strategic thinking. For talent acquisition leaders thinking about how AI fluency in HR manifests in their teams, this distinction between technical depth and practical fluency clarifies what to actually screen for during hiring.

How Zapier Measures AI Fluency Across the Hiring Funnel

What separates Zapier's approach from generic platitudes is systematic measurement at four specific moments, creating multiple data points that compound into a clear signal.

During the application stage, candidates answer an optional question about current usage. These responses help the TA team quickly highlight standout candidates without excluding anyone.

The initial screen includes questions like "How do you currently use AI in your daily work?" revealing whether someone thinks about these tools as curiosities or as practical amplifiers.

Skills assessments vary by role but evaluate mindset and tool usage in real contexts. For engineering roles, this might mean demonstrating problem-solving with AI interview tools. For operations roles, it might involve designing a workflow using talent operations automation. The tests reveal how candidates think when given access to capabilities that didn't exist three years ago.

The executive interview assesses strategic thinking and confirms the candidate meets minimum fluency expectations. By this stage, hiring managers can determine whether someone sees these tools as tactical gains or as strategic enablers.

This multi-stage approach prevents any single interview from carrying too much weight. For teams building integrated recruiting tools that need to evaluate fluency consistently, Zapier's model offers a blueprint for systematic assessment.
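For teams that want to record these data points consistently, the four stages can be sketched as a small scoring helper. This is an illustrative sketch only: Zapier has not published how it combines stage ratings, so the `Fluency` enum, the stage names, and the choice of the lower median as the combining rule are all assumptions made for the example.

```python
from enum import IntEnum
from statistics import median_low


class Fluency(IntEnum):
    """The four levels from the framework, ordered lowest to highest."""
    UNACCEPTABLE = 0
    CAPABLE = 1
    ADOPTIVE = 2
    TRANSFORMATIVE = 3


# Hypothetical stage keys mirroring the four measurement moments.
STAGES = ("application", "initial_screen", "skills_assessment", "executive_interview")


def overall_level(ratings: dict[str, Fluency]) -> Fluency:
    """Combine per-stage ratings using the lower median (an assumed rule),
    so that a single outlier stage does not decide the outcome."""
    observed = [ratings[stage] for stage in STAGES if stage in ratings]
    if not observed:
        raise ValueError("no stage ratings recorded")
    return Fluency(median_low(observed))


def meets_bar(ratings: dict[str, Fluency], minimum: Fluency = Fluency.CAPABLE) -> bool:
    """Check the combined rating against the minimum bar (Capable by default)."""
    return overall_level(ratings) >= minimum


# The application question is optional, so a candidate may have only three ratings.
candidate = {
    "initial_screen": Fluency.ADOPTIVE,
    "skills_assessment": Fluency.CAPABLE,
    "executive_interview": Fluency.ADOPTIVE,
}
print(overall_level(candidate).name, meets_bar(candidate))
```

The lower median is a conservative choice: one unusually strong or weak interview shifts the result less than it would under a maximum, minimum, or simple average. Any real process would still need human calibration behind each rating.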

What This Framework Reveals About Future Hiring Standards

Zapier's decision to make AI fluency a baseline requirement shows that the gap between organizations that systematically build this capability and those that treat it as optional is widening fast.

When you require demonstrated curiosity and a builder's mindset, you're selecting for people who don't wait for perfect instructions, who experiment when existing approaches create friction, and who think about leverage rather than just task completion. Someone at the "Capable" level can adapt as new tools emerge because they've already built the muscle of learning by doing.

The framework also addresses a challenge many CHROs face: how do you evaluate capability when best practices are still being written? Zapier's answer is to measure mindset and application rather than technical depth, and to judge them in role-specific context rather than against universal standards.

The question isn't whether these tools will become table stakes; they already are. The question is whether you systematize that expectation now, when it's still differentiating, or wait until you're playing catch-up. Teams already tracking this through their talent operations dashboard see clear patterns: candidates with demonstrated fluency onboard faster, contribute more quickly, and show higher engagement.

Implementing an AI Fluency Framework in Your Hiring Process

Define what each level means for your most common roles. An "Adoptive" level engineer looks different from an "Adoptive" designer, but both should demonstrate iterative experimentation, outcome orientation, and the ability to explain how tools improve their work.
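One way to write these definitions down is a shared rubric that pairs the common signals with role-specific examples. The sketch below is a hypothetical structure, not Zapier's: the `LevelDefinition` class, the `RUBRIC` table, and the role examples inside it are placeholders to replace with your own calibrated examples.

```python
from dataclasses import dataclass

# Signals every "Adoptive" candidate should show, taken from the paragraph above.
SHARED_SIGNALS = (
    "iterative experimentation",
    "outcome orientation",
    "can explain how tools improve their work",
)


@dataclass(frozen=True)
class LevelDefinition:
    role: str
    level: str
    role_examples: tuple[str, ...]  # placeholder examples, defined per role
    shared_signals: tuple[str, ...] = SHARED_SIGNALS


# Hypothetical entries; the example text is illustrative only.
RUBRIC = {
    ("engineer", "Adoptive"): LevelDefinition(
        role="engineer",
        level="Adoptive",
        role_examples=("describes an AI-assisted workflow they refined over several iterations",),
    ),
    ("designer", "Adoptive"): LevelDefinition(
        role="designer",
        level="Adoptive",
        role_examples=("shows how AI tools changed the way they explore design options",),
    ),
}


def interviewer_checklist(role: str, level: str) -> list[str]:
    """Return the shared signals followed by the role-specific examples."""
    definition = RUBRIC[(role, level)]
    return [*definition.shared_signals, *definition.role_examples]


for item in interviewer_checklist("designer", "Adoptive"):
    print("-", item)
```

Keeping the shared signals in one place and the examples per role means interviewers across functions evaluate the same underlying behaviors while looking for different evidence.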

Build assessment moments into your existing funnel. If you're already doing technical assessments, modify them to include a component where candidates can use AI recruitment tools and explain their approach. The goal is signal accumulation across touchpoints.

Train your interviewers on what fluency actually looks like. Many hiring managers will confuse "uses ChatGPT occasionally" with genuine fluency. Provide concrete examples, and calibrate across your interview team.

Be transparent with candidates about what you're evaluating. Zapier provides AI training materials so everyone has an opportunity to meet their fluency bar. This isn't about gotcha questions; it's about clear expectations.

The Broader Implications for Talent Acquisition

Zapier's framework signals a shift in what "qualified" means for knowledge work. The proxies we've used for decades, including education credentials and years of experience, are breaking down as adaptability, learning velocity, and comfort with ambiguity become more important than static knowledge.

If fluency with rapidly evolving tools becomes a baseline expectation, your hiring process itself needs to model that fluency. Organizations that require AI fluency from candidates but operate hiring processes that ignore these efficiencies send a mixed message.

Perhaps most significantly, requiring AI fluency addresses the gap between what organizations say they value and what they actually reward. Zapier's framework makes the implicit explicit: if we believe these tools are transformative, we should build our hiring process around finding people who demonstrate comfort with them.

FAQs

What's the minimum level of AI fluency most organizations should require from new hires?

The "Capable" level is emerging as the practical baseline for most knowledge work roles: someone who has purposefully used these tools and can articulate the value they provide. For technical roles, many organizations are moving toward the "Adoptive" level. The specific bar depends on your industry and the pace at which your competitors are moving, but treating these capabilities as optional is increasingly difficult to justify.

How do we evaluate AI fluency without introducing bias against candidates who haven't had access to premium tools?

Focus your evaluation on mindset and strategic thinking rather than familiarity with specific platforms. The best candidates often demonstrate fluency through creative use of freely available options and curiosity about what the tools can do. Provide clear guidance before interviews about what you're evaluating, and design assessments that allow candidates to explain their thinking process. The goal is to screen for adaptability.

Should we require the same AI fluency level across all roles, or customize by function?

Customize the specific application while maintaining a consistent baseline around mindset. The "Capable" level should probably be universal, but what "Adoptive" or "Transformative" looks like will vary significantly by role. Define role-specific examples so your hiring managers can evaluate consistently without expecting every function to demonstrate fluency in identical ways.
